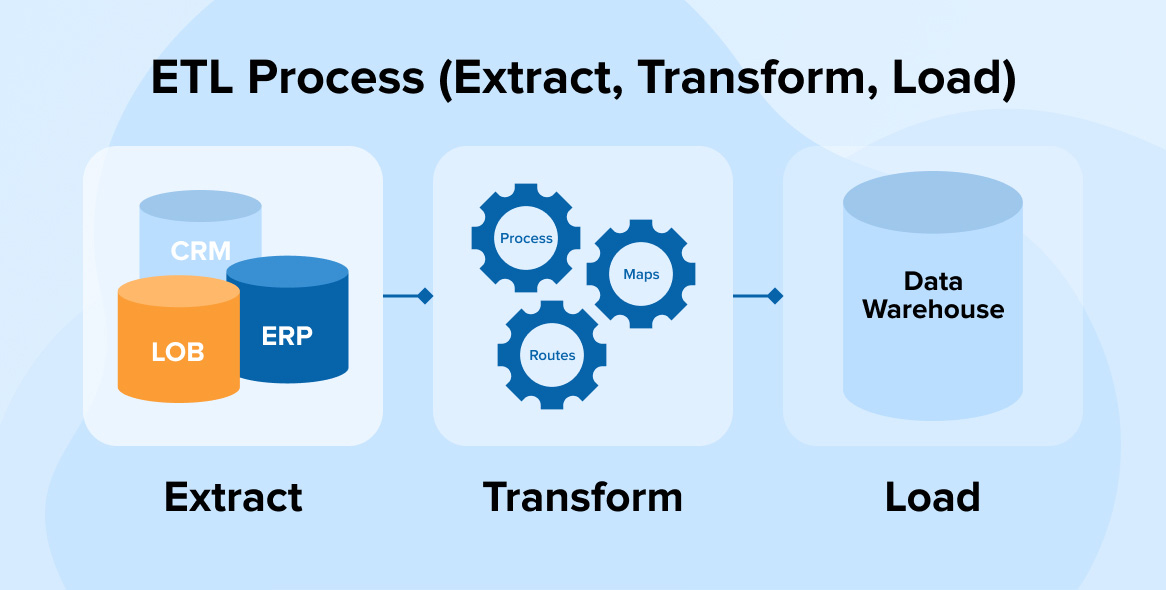

In this case change data detection occurs during Load. All the data in the source entity is extracted. Several patterns for Extract can be employed: For the purposes of the Data Warehouse, the Landing Data Store is transient, meaning it doesn’t persist the data between ETL batch runs. Conceptually, because of it’s file based nature, the Landing data store could be the raw data layer of a Data Lake. The data is written to a Landing data store, typically a local or cloud based file system ready for loading into the Staging/Persistent Layer. Source systems can include Databases, Text Files, Excel spread sheets, or any other kind of source data. The extract process pulls data from a source system, usually on a nightly basis. This borrows heavily from the Kimball methodology, but also incorporates our learning over many data warehouse implementations, the advent of the persistent staging concept, and the advent of Cloud based Data Warehouse solutions. The diagram below describes the ETL and Data stores utilized by Dimodelo Data Warehouse Studio when generating a Data Warehouse solution. There are as many ways to design ETL as their are designers. ELT also has the advantage of keeping large amounts of historical unprocessed data on hand ready for the day it may be needed for new analysis. ELT asks less of remote sources, requiring only their raw and unprepared data.ĮLT is gaining popularity because of the exponential growth of high scale processing power with database platforms themselves, like MPP databases, Big Data Clusters etc. The transformation of data, in an ELT process, happens within the target database. ETL vs ELTĮxtract Transform/load (ETL) is an integration approach that pulls information from remote sources, transforms it into defined formats and styles, then loads it into databases, data sources, or data warehouses.Įxtract/load/transform (ELT) similarly extracts data from one or multiple remote sources, but then loads it into the target data warehouse without any other formatting. That meta data can then be used to generate code for a variety of evolving platforms and technologies. It captures meta data about you design rather than code. Dimodelo Data Warehouse Studio is a Meta Data Driven Data Warehouse tool. It comes with Data Architecture and ETL patterns built in that address the challenges listed above It will even generate all the code for you. ETL that worked on on-premise databases, won’t work for the cloud environment.ĭimodelo Data Warehouse Studio solves many of these issues for you. With the advent of the cloud, with a limited “pipe” between on-premise data sources and a cloud based data warehouse, and with different data load techniques targeting new technologies (Massive Parallel Processing Databases, Data Lakes, Big Data), the nature of ETL has changed significantly. In addition, ETL techniques are constantly changing. It can take several months at least to derive effective ETL patterns. Full,Partial or Incremental sources and joins across each source.Īnd that’s just the start.

Schema changes of Source and Target entities.Type 1 Only, Type 2 Only and Type 1 and 2 mixed Dimensions. Heterogeneous Source systems and Connectivity.Persisting data and keeping an accurate history.For example, how do you handle the following: But once you delve in to it, it becomes more difficult with lots of use cases that must be managed. It’s merely extracting data from one data source and inserting it into another. The “Develop a Data Warehouse with Dimodelo Data Warehouse Studio” course is an in-depth description of using Dimodelo Data Warehouse Studio to build a Data Warehouse and its ETL. This section will just provide an overview of ETL processes. You could write an entire book about ETL, and several people have including Kimball himself, including the 34 Subsystems of ETL. Up to 80% of your cost will be in developing the ETL.ĮTL is complex. Development of an ETL process is the major cost in delivering a Business Intelligence Solution. ETL stands for Extract, Transform and Load. It refers to the process of extracting data from the Source systems, transforming it into the star schema format and loading it into the relational Data Warehouse.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed